I recently bumped on a post by François on FieldByName performance, and was bit surprised by the magnitude of speedups reported by Marco Cantu in a comment:

” I keep fixing similar code I see my clients use, and in some case the performance can increase 5 to 10 times, for large loops. Good you are raising this problem. ”

We have similar-looking code being used here in our datasets (which aren’t TDataset-related at all however), and yet, repeatedly looking up fields by name isn’t a performance issue (it hardly registers in the profiling results, even in worst case situations, like in-memory SQLite DBs).

By curiosity, I had a look at DB.pas… Suffice it to say that the VCL code make a good case study of why a Profiler is your friend, and what “out of thin air” optimization can lead to.

The FindField case of Unicode comparisons

The DB.pas code being copyrighted, I won’t post any excerpts here, but you can find it easily enough yourself, so I’ll just describe what happens.

FindField’s purpose is to find a TField by its name, in a case-insensitive fashion.

A naive implementation would thus look like that:

for i := 0 to FFields.Count-1 do begin

Result := FFields[i];

if AnsiCompareText(Result.Name, FieldName) = 0 then

Exit;

end;

Result := nil;

I then made a quick benchmark, consisting of a three cases:

- “best” case consists in finding the first field

- “worst” case consists in finding the 20th field

- “all” case consists in finding 20 fields out of the 20 fields

Field names were like “MyFieldName1”, “MyFieldName2”, etc. up to “MyFieldName20”. You’ll note that the differencing characters are at the end of the string, so the situation is quite unfavorable in terms of string comparisons, but neutral if you were to hash those strings f.i.

Also keep in mind I’ve just got a recent CPU (at the date of writing), and the timings afterward are for 100k lookups. On a regular end-user machine, you could probably double or quadruple the figures.

With the naive implementation, the “best” case performance is 19 ms, “worst” case 400 ms, and “all” is 210 ms.

This is quite lengthy, as with Unicode, case insensitive comparison (AnsiCompareText) is quite complex and expensive time-wise. There can be an obvious performance issue with FindField if used in a loop.

Case study of an optimization gone wrong

To cut down on that complexity, the VCL implementors chose to go for a hash. A risky choice to begin with: a hash has to be good enough to limit collisions, it has to be computed fast enough to actually bring a benefit, and last but not least, it results in sometimes complex extra code (and here you need a case insensitive hash, a plain old binary hash won’t do).

So the VCL code goes on to compute a hash for each of the fields, and alters the naive implementation above by checking the hash before performing the AnsiCompareText, doing the comparison only the hash matches. So far so good, eh? Well, here the trouble begins.

First, the VCL code is still facing one AnsiCompareText per FindField hit, plus an AnsiUpperCase (which is almost as expensive) to compute the hash.

Second, the TNamedItem.HashName implementation is a collection of “don’t”, look for yourself in the code:

- it includes a custom GetStringLength inline, to cut down on the access to the string character count probably, access which happens twice in the implementation (and which a profiling would have revealed to be negligible, especially in light of the following)

- since the introduction of FrankenStrings, a String can hold Ansi as well as UTF16, and the hash is computed on an UpperCase’d UTF-16, so you’ve got extra conversion code in there, in case it is Ansi, including dynamic allocation to serve as buffer for UnicodeFromLocaleChars, which is invoked no less than twice

- in case the String is already UTF-16, a buffer is still dynamically allocated, and the AnsiUpperCase’d name copied to it, I guess that’s to preserve the “efficiency” of the loop that computes the actual hash…

- then comes the hash computation loop, that was obviously where the optimization effort went, it works on a PChar, does a ROL and XOR, and it is certainly efficient, except that a mere profiling would have shown its efficiency didn’t matter, unless your field names are several hundred characters long…

The VCL implementation has a “best” case performance of 42 ms (2 times slower than naive), a “worst” case of 50 ms (8 times faster than naive), and “all” of 46ms (5 times faster than naive).

Thing is, you likely won’t often have 20 fields in your queries, and the VCL implementation needs at least 3 fields to pull ahead of the naive implementation. Given the size and complexity of the VCL code involved, I would say that’s quite an under-achievement.

Last but not least, if you profile the VCL code, you’ll see that HashName, a whole bunch of memory allocation and string management code from System.pas are quite stressed, given the above, that’s not too surprising, but that means performance in a multi-threaded situation will only get worse.

Doing it the efficient way

Let’s do it with the help of a profiler, and a bit of laziness.

Initially AnsiCompareText is the obvious, overwhelming culprit the profiler will point to in the naive implementation, there are two roads from that point:

- optimizing AnsiCompareText, this is complex, involves quite a bit of code, and we’re lazy, remember?

- the fastest AnsiCompareText is the one you don’t do, that’s the lazy road.

How to not do the AnsiCompareText?

One reason there are so many of them in the first place is that there is a loop on the fields. And when optimizing, loops are good, they mean you’ve got big O optimization potential, and big O optimization is how you achieve orders of magnitude speedups.

In this case, it’s a simple O(n) string search loop, for which one of the classic optimizations is a reduction to O(ln n) using binary search. That however requires an ordered list, and the Fields list isn’t sorted, and can’t be sorted.

So we need a good old fashioned index.

One such readily available index is good old TStringList, with Sorted set to True: place the field names in the Strings[], the TField object in the Objects[]. Use IndexOf() to find a field. That’s all. You have reduced the AnsiCompareText from O(n) to O(ln n).

// after filling up or altering the Fields FIndex.Clear; // FIndex assumed created, set to sorted, case insensitive for i := 0 to FFields.Count-1 do FIndex.AddObject( FFields[i].Name, FFields[i] ); ... // Find a field with i := FIndex.IndexOf( fieldName ); if i >=0 then field := TField( FIndex.Objects[i] ) else field := nil;

With the above code, on a 20 fields situation, the best/worst/all cases benchmarks around 78 ms, which is coherent with the O(ln n) expectation (there are no best or worst case).

A quick look in the profile reveals AnsiCompareText is still the overwhelming bottleneck, instead of being the one in our code, it’s now the one in the TStringList.CompareStrings. The profiler tells us the AnsiCompareText is the key, no need to worry about optimizing Length() 😉

How to go further from there?

We are O(ln n), could we go to O(1)? That would involve a hash list, which means a hash, a case-insensitive hash. We don’t have one handy, they’re complex, this is not lazy. Also a hash list can be costly memory-wise, we don’t want a huge setup for what could be a two-fields-dataset affair in practice.

The fastest AnsiCompareText is the one you don’t do… so, do we really need AnsiCompareText? No.

Why? Because we only really need to index the names that are looked up, and FindField is troublesome when it’s invoked in a loop, looking up the very same name strings again and again, ad nauseum.

Rather than indexing the field names, we can index the field names that are actually looked up, but this time in a case sensitive TStringList, thus changing our index to a cache of sorts:

// after filling up or altering the Fields

FIndex.Clear; // FIndex assumed created, set to sorted, case sensitive

...

// Find a field with

k:=FIndex.IndexOf(FieldName);

if k>=0 then

Result:=TField(FIndex.Objects[k])

else begin

// not in index, find it with naive implementation

Result:=nil;

for i:=0 to FFields.Count-1 do begin

Result:=FFields[i];

if AnsiCompareText(Result.Name, FieldName) = 0 then

Break;

end;

// add to index

FIndex.AddObject(FieldName, Result);

end;

After benchmarking, however, there is no speedup… A look at the profiling results shows that TStringList.CompareStrings is still the bottleneck, this time because of AnsiCompareStr… is there no end to them slow Unicode functions???

Why is AnsiCompareStr slow? in part because in Unicode the same character can be encoded differently, and in part because the WinAPI implementation is just plain no good.

In our case however, the Unicode encodings details don’t matter, it’s a cache, the ordering is meaningless as long as it is consistent, so we can just subclass TStringList and override CompareStrings:

function TIndexStringList.CompareStrings(const S1, S2: string): Integer; begin Result:=CompareStr(S1, S2); end;

In Delphi XE, CompareStr() is fast, it is still based on Pierre le Riche’s FastCode version (but who knows what will happen to it in Delphi 64 where there is no BASM? but I digress…).

Wrapping it up

The new benchmark figures are now around 8 ms, in all cases. That’s five times faster than the VCL’s best case with a 20 fields dataset, and it scales nicely with field counts, as we’re still O(ln n). Just to illustrate:

- with 3 fields: VCL takes 42 to 45 ms, our code 4 to 5.6 ms

- with 100 fields: VCL takes 42 to 81 ms, our code 10 to 18 ms.

This is also achieved with a lot less code than the VCL, no asm or fancy tricks, and we achieved speedups of the same magnitude as what Marco Cantu reported, what François and all TDataset users have to labor for by optimizing on the spot every time they have a loop on a dataset.

Is there some more fat to be trimmed?

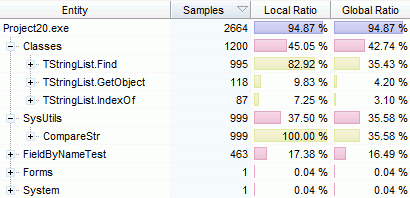

The final profiling of the benchmark look like that:

16.5% are lost to the overhead (a simple loop that calls FindField repeatedly), we can assume CompareStr is optimal enough, the rest is spent in TStringList methods which are decently implemented.

16.5% are lost to the overhead (a simple loop that calls FindField repeatedly), we can assume CompareStr is optimal enough, the rest is spent in TStringList methods which are decently implemented.

As a bonus, you’ll notice there is no noteworthy stress on dynamic memory allocation, meaning things will scale all the better when multi-threading.

As an alternative to TStringList, you could wonder about the new generic TDictionary<>, if you’re feeling adventurous.

However, you wouldn’t be rewarded for your risk, as it’s slower than the solution exposed here (up to 5 times slower when there are few fields, and slightly slower when there are hundreds of fields). A look at the profiler shows it wastes most of its time… computing the hash. Its memory overhead is also quite higher and would probably bite in a multi-thread situation (I’ll let the astute reader figure out why the TStringList approach gets away with very little memory allocation).

The optimization is done.

You could of course go further, there are a couple low hanging percents to grab, but to really improve, it would involve libraries or extra code that would go beyond the scope of an isolated optimization case such as this one, IMHO.

Very interesting work, Eric! Good job.

It’s worth noting, though, that under Fair Use doctrine, you’re explicitly allowed to copy and republish small snippets of a copyrighted work for the purposes of commentary or critique. (IANAL, of course.)

A very good justification as why the standard FieldByName as used by the majority of Delphi developers should be avoided “a priori”.

Now a question: have you tried and measured with the same cases when taking the FieldByName out of the loop, with either local variables or persistent Field objects?

You said that “when optimizing, loops are good…”, I would still say that one of the best way to optimize loops is to remove them or to remove as much as possible from the loop, which is exactly what you are doing by removing the AnsiCompareXX routines.

Why not directly remove FieldByName/FindField altogether when possible?

After all, nobody in their right mind would use a TEdit by repetedly doing a FindComponent in its owner Form, would they?

> it includes a custom GetStringLength inline

I can explain that one. Length(UniS) where UniS holds an AnsiString, will convert the string to a real UnicodeString with memory allocation, CPU locks and a MultiByteToWideChar call. But this is something the function handles itself. It would spent time converting it twice. But with Delphi XE where this $STRINGCHECKS crap was removed, the function could have been simplified. But I guess nobody knew about this special handling anymore.

Be careful to simplify string compare operations. English is not the only language on the planet.

Because optimizing is all about focusing on actual bottlenecks, and not on possible ones. That’s the error made by the vcl implementors with FindField, they focused on imagined complexity, so their code ends up more complex and less efficient at the same time. In your queries, if FieldByName is no longer a bottleneck, then you’ll be better off investing code in fixing the actual bottleneck, rather than add complexity with little measurable effect.

It’s a classic danger with optimization recipes: one shouldn’t forget that they’re optimization recipes, and so should be made use of only when necessary. This is also why fast base classes and libraries is important: what is handled correctly doesn’t need to be worked around.

I’m well aware of that, and the optimization actually supports that, look closely, the non-unicode check is made where it doesn’t affect Unicode or Ansi locales checks. It’s when looking up the index, not the fieldname. (hint: case-insensitive “duplicates” are accepted by the index, even though they’ll likely be rarities in practice)

I guess that kludge had to bite them back somehow. Next the frankenstrings will probably bite them too, assuming they haven’t already.

@Eric

Frankenstrings – I like this definition (if I understand it correctly. 🙂 ).

The new UnicodeString with the build-in ?odepage field was a bad architecture decision.

@Kryvich

It was a decision to allow C++Builder developers to migrate their projects to unicode without actually migrating them. But I can’t tell you one C++Builder user who went this non-migration path.

@Eric

Did you benchmark TObjectDictionary compared to TStringList? My benchmarks indicate that a class I am working on (based on TObjectDictionary) outperform TStringList by a factor of two even at small numbers (6), and that the divergence grows as you increase the number of items, Note that this assumes that you create the dictionary once, and reuse it a large number of times.

Yes, that is for case sensitive sorted stringlist with the fix mentionned in the article. Under that condition, I’m seeing a 5 to 1 advantage for stringlist on small counts, and TDictionnary only gets closer with very high counts (but doesn’t get faster, at least up to a few hundred entries). I haven’t benched in a multithreading situation, but my guess is that stringlist would pull further ahead because of the reduced memory pressure. That’s valid only for strings of a couple dozen characters though, if your strings get larger, the hash strategy can pull ahead if the first chars of the strings aren’t discriminating.

Vanilla stringlist with case sensitive is no good though, as I said in the article.

@Eric I’ll post the benchmarks and source code to my blog during the weekend. It uses 6 or 12 strings in the list or dictionary, all shorter than 10 chars, but it has a single create/fill and half a million lookups, and for me the dictionary is twice as fast as the string list. I wonder what the difference between my usage and your usage is? Could it be 16-byte boundary issues that play a trick here?

Dunno, when I profiled, the TDictionnary bottleneck was its hash function, it took longer to hash than for the stringlist to find a match. Anyway, the answer will be in the profiling 🙂

Posted: http://delphi.fosdal.com/2010/12/generic-cache.html

@Lars Fosdal

Your TStringList implementation doesn’t make use of the fix mentioned here (overriding CompareStrings), make use of it, and your benchmark results will change 😉

“I’ll let the astute reader figure out why the TStringList approach gets away with very little memory allocation”

Just a quick guess – the strings are never modified, rather just added to the internal TStrings array, so the string reference counting in Delphi results in just moving around string pointers instead of the entire string buffers (no FastMM calls, no contention, etc.).

I sometimes wonder how much we take the reference-counted strings in Delphi for granted. They’re the epitome of “lazy” – they put off doing any actual “work” until it is absolutely necessary. 🙂